Hand/gesture detection

Phase one

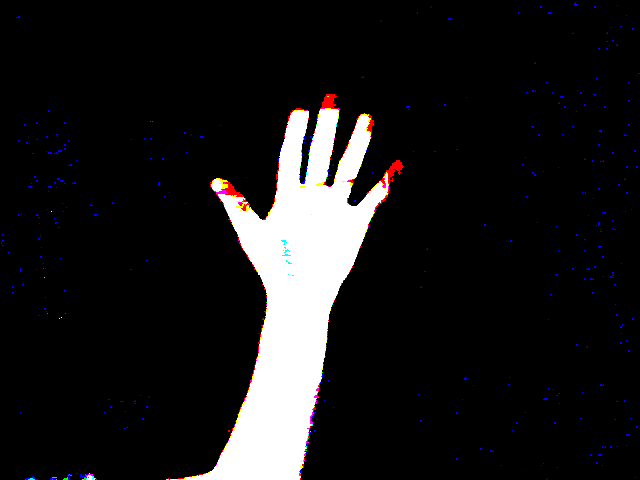

Our first goal in phase one was to figure out how to get a clear representation of our hand minus the background so that we have a simplified scene in which to run gesture detection.


Super simple task. I record the first frame captured when the program starts as the background, and subtract it from every frame afterwards. This results in the bottom image to the left.
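As a rough sketch of the idea above (not the project's actual code), the subtraction could look like the following. It assumes frames arrive as `uint8` NumPy arrays and uses an absolute difference, which is my assumption; the function name `subtract_background` and the synthetic frames are purely illustrative.

```python
import numpy as np

def subtract_background(frame, background):
    """Return the per-pixel absolute difference between a frame and the background."""
    # Widen to a signed type first so that uint8 subtraction cannot wrap around.
    diff = frame.astype(np.int16) - background.astype(np.int16)
    return np.abs(diff).astype(np.uint8)

# Tiny synthetic example: a flat grey background and a frame with a brighter "hand" patch.
# In a live program the background would be the first frame read from the camera.
background = np.full((4, 4), 100, dtype=np.uint8)
frame = background.copy()
frame[1:3, 1:3] = 180

foreground = subtract_background(frame, background)
```

Pixels that did not change come out as 0, and only the region that differs from the stored background keeps a non-zero value.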

Current problems: notice how the tip of my middle finger is slightly cut off, and how there appears to be a solid dark line running through my pinky finger. Although slight, these artifacts might become an issue later on, so we will deal with them in phase two.

Phase two

We now have a basic description of our "hand" recorded in an image, but to make it much easier for the computer to understand, let's change this image so that it is composed only of "0" pixels (black) and "255" pixels (white).


At first glance, we should just be able to truncate any pixel value lower than a certain xMin to 0, and raise any pixel value higher than a certain xMax to 255. Doing this on the color image applies the comparison to each of the 3 color channels separately, and you will notice that the tips of my fingers are red. This is because the trim of my closet has a red value above 25, so my fingers are rendered as (255, 0, 0) RGB. You can also notice some blue noise all around the image.
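To illustrate why the colored artifacts appear, here is a minimal sketch of per-channel thresholding. It assumes a single cut-off of 25 for both xMin and xMax and an RGB channel order; the name `threshold_per_channel` and the sample pixel are made up for the example.

```python
import numpy as np

THRESHOLD = 25  # assumed cut-off, taken from the value mentioned in the text

def threshold_per_channel(image, threshold=THRESHOLD):
    # Each channel is compared on its own, so one pixel can end up
    # with a mix of 0 and 255 across its channels.
    return np.where(image > threshold, 255, 0).astype(np.uint8)

# One RGB pixel whose red channel alone is above the threshold.
pixel = np.array([[[40, 10, 5]]], dtype=np.uint8)
result = threshold_per_channel(pixel)
print(result[0, 0].tolist())  # [255, 0, 0]
```

Because only the red channel crosses the threshold, the pixel becomes pure red instead of black or white.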


After many back-and-forth attempts at getting an accurate representation of my hand, I really started to notice how annoying background features can be if they match your skin too closely. I did, however, find a solution that gave me just about what I wanted. While the final result isn't an exact replica of my hand, it is a result that the computer can take and do accurate computations on.


Note: Pay close attention to the location of my closet trim in these two gifs.

The top image is the original, where I convert the camera feed to greyscale and simply say: if pixel < 25 then pixel = 0; else pixel = 255. Notice in this image how my fingers get cut in half, which I had a feeling would cause problems later, so I fixed it. The bottom image is the result after the fix. While it doesn't look as elegant to humans because it is rather "square" for a human hand, the computer will be able to use it much more effectively.
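The greyscale rule for the top image can be sketched roughly as below. The plain channel average used for the greyscale conversion is an assumption (real pipelines often use a weighted sum), and `to_grey` and `binarize` are illustrative names. The extra step that produced the bottom image is not reproduced here.

```python
import numpy as np

def to_grey(image_rgb):
    # Plain average of the three channels; an assumption made for this sketch.
    return image_rgb.astype(np.float32).mean(axis=2)

def binarize(grey, threshold=25):
    # Pixels darker than the threshold become black, everything else white.
    return np.where(grey < threshold, 0, 255).astype(np.uint8)

# Two sample pixels: a dark one with a slightly raised red channel, and a bright one.
image = np.array([[[40, 10, 5], [200, 180, 170]]], dtype=np.uint8)
mask = binarize(to_grey(image))
print(mask.tolist())  # [[0, 255]]
```

Working on a single grey channel means every pixel ends up either fully black or fully white, so the colored artifacts from per-channel thresholding cannot occur.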

Phase three

We now have a model for our computer to work from, so let's start generating some data.

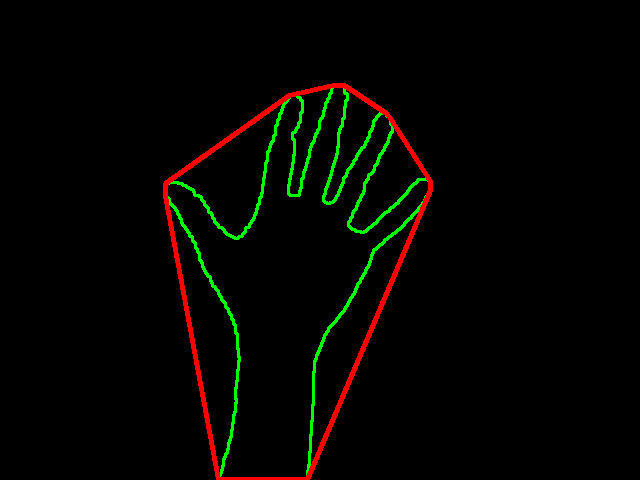

Now that we have simplified our data as far as we have, we can start using the data to detect what is going on in our scene. I'm going to start with detecting the number of fingers I am holding up.


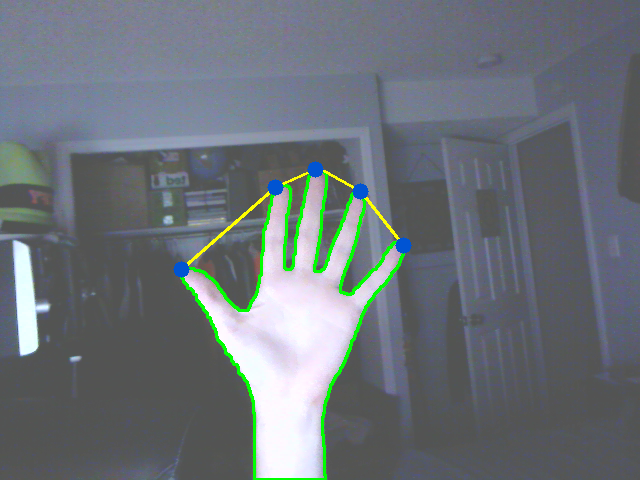

From my research I discovered that you can use contour defects to find the points in between fingers, as seen in the top image. The blue dots are the points calculated by the defects, and the white dot is the average of them.

However, I wasn't quite a fan of this technique, since it would be much more interesting to have our points at the fingertips, so I used a similar technique to create the bottom image. One problem here that I couldn't find an effective way to solve without it taking 24 hours by itself was how to track a single finger. This solution works wonders for 2, 3, 4, and 5 fingers, but it won't detect a single finger. Now for phase four, I'd like to create a simple demonstration that shows how this data (i.e. input from the user) can be used to control elements in our scene.

Phase four

Nothing too closely related to computer vision here, but it is neat nonetheless.


3 simple controls to interact with the square. You can move it around with 2 fingers, you can make it grow with 3 fingers, and you can make it shrink back down with 2 fingers.

There is some back-end logic that "smooths" the number of fingers detected on screen so that you don't accidentally do something you didn't intend. It takes the most frequently occurring number of fingers over the last 10 frames to decide what interaction you want to have with the square.
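The smoothing described above can be sketched with a fixed-size window and a majority vote. This is only an illustration of the idea, not the code from the project; the class name `FingerCountSmoother` and the sample readings are made up.

```python
from collections import Counter, deque

class FingerCountSmoother:
    """Keep the last `window` finger counts and report the most frequent one."""

    def __init__(self, window=10):
        # A deque with maxlen drops the oldest reading automatically.
        self.history = deque(maxlen=window)

    def update(self, count):
        self.history.append(count)
        # most_common(1) returns [(value, occurrences)] for the top value.
        return Counter(self.history).most_common(1)[0][0]

smoother = FingerCountSmoother()
readings = [2, 2, 2, 3, 2, 2, 5, 2, 2, 2]  # two noisy frames among steady 2s
smoothed = [smoother.update(r) for r in readings]
print(smoothed[-1])  # 2
```

The single-frame misreads of 3 and 5 never win the vote, so the reported count stays at 2 throughout.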

Full code

Main.py

GestureDetection.py